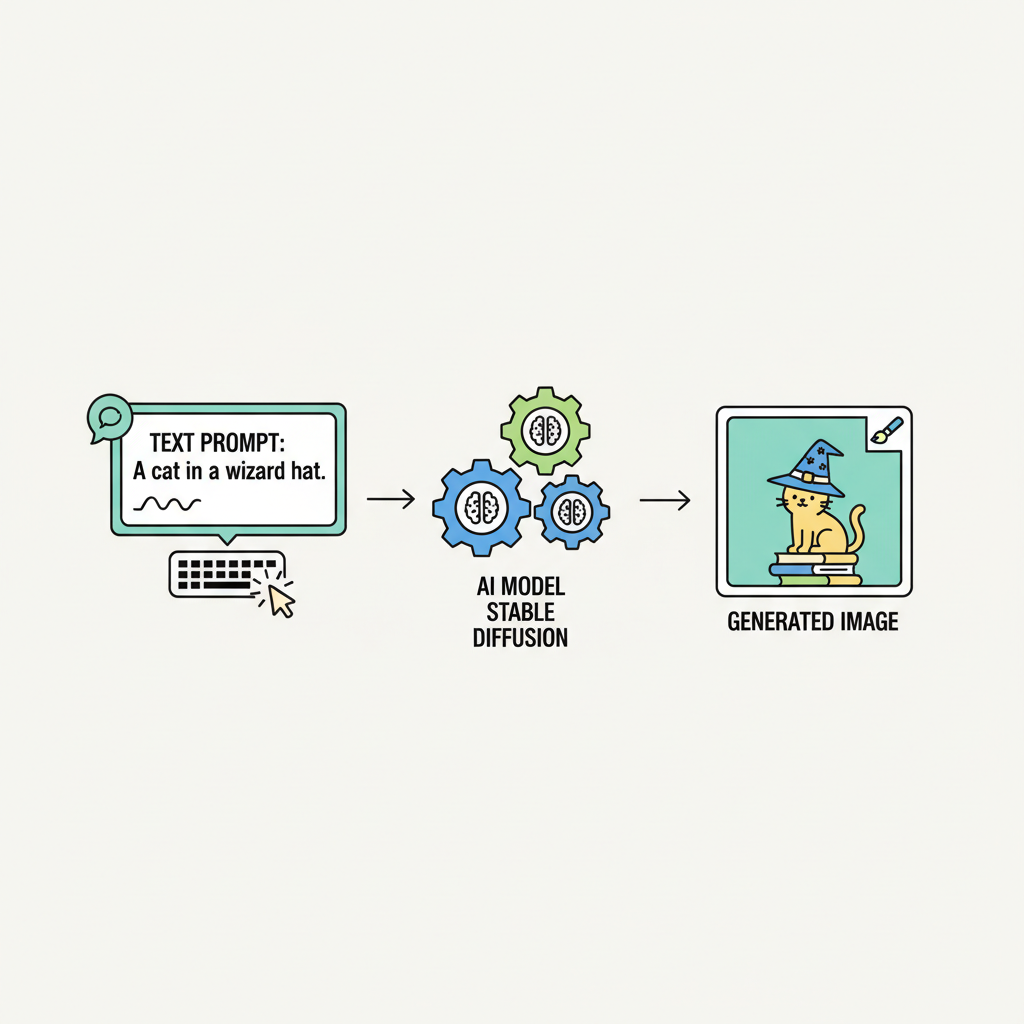

In the surging world of AI inference, where every token counts, traditional subscription models and API keys are buckling under the weight of unpredictable usage. Enter x402, a protocol that resurrects the long-dormant HTTP 402 "Payment Required" status code to enable true pay-per-inference billing. Developers can now build AI APIs that charge precisely for the compute they use, fostering an ecosystem where AI agents pay autonomously onchain without friction. This isn't just a payment tweak; it's a foundational shift toward machine-native economics, especially now that x402 v2's enhancements have primed it for high-volume AI workloads.

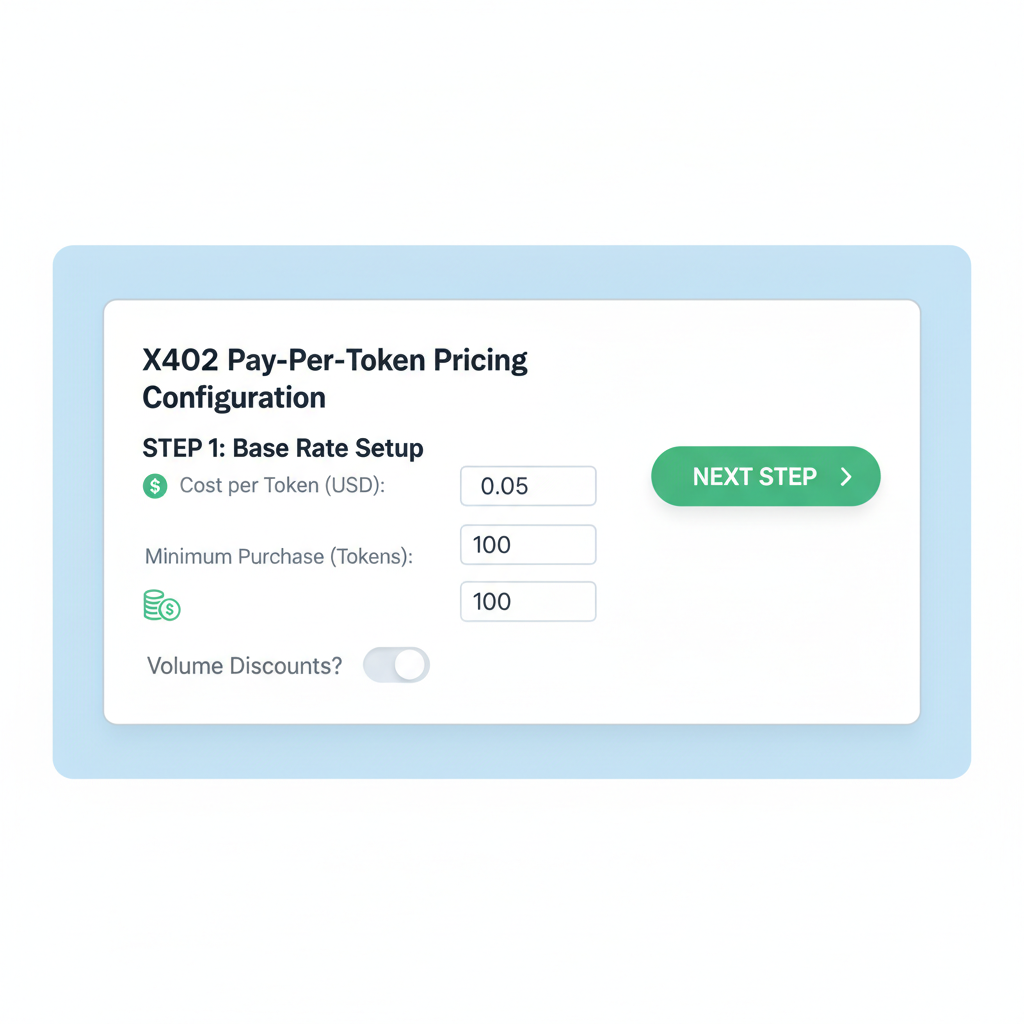

x402 v2, launched recently, standardizes payment formats across chains and even legacy payment systems, and introduces dynamic 'payTo' routing. This means your pay-per-inference SDK can handle diverse assets, from Solana to Base, without code overhauls. An extensible architecture lets you plug in custom logic via lifecycle hooks, while wallet-based sessions and automatic discovery keep metadata fresh. For AI developers, this translates to scalable micropayments that match inference costs down to the millicent.
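To make dynamic 'payTo' routing and millicent pricing concrete, here is a minimal sketch that picks a recipient address per network and expresses the price in USDC's smallest unit (USDC uses 6 decimals, so one millicent, $0.00001, is 10 units). The recipient table, the placeholder addresses, and the `build_requirements` helper are hypothetical; the field names only loosely follow x402's published payment-requirements shape, so check the v2 spec for the exact schema.

```python
from decimal import Decimal, ROUND_CEILING

# Hypothetical recipient table: one payTo address per supported network.
# These are placeholders, not real wallets.
PAY_TO_BY_NETWORK = {
    "base": "0xBaseRecipientPlaceholder",
    "solana": "SolanaRecipientPlaceholder",
}

USDC_DECIMALS = 6  # USDC has 6 decimal places on Base and on Solana


def to_atomic_units(usd_price: Decimal, decimals: int = USDC_DECIMALS) -> int:
    """Convert a USD price to integer token units, rounding up so we never undercharge."""
    scaled = usd_price * (Decimal(10) ** decimals)
    return int(scaled.to_integral_value(rounding=ROUND_CEILING))


def build_requirements(network: str, usd_price: Decimal, resource: str) -> dict:
    """Assemble a payment-requirements dict, routing payTo by the client's network.

    Field names are an assumption modelled on x402 v1, not a v2 guarantee.
    """
    if network not in PAY_TO_BY_NETWORK:
        raise ValueError(f"unsupported network: {network}")
    return {
        "scheme": "exact",
        "network": network,
        "maxAmountRequired": str(to_atomic_units(usd_price)),
        "payTo": PAY_TO_BY_NETWORK[network],
        "resource": resource,
    }
```

Rounding up is a deliberate choice: at sub-cent prices, truncation would silently give away compute on every call.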

Unlocking Autonomous AI Agents with x402

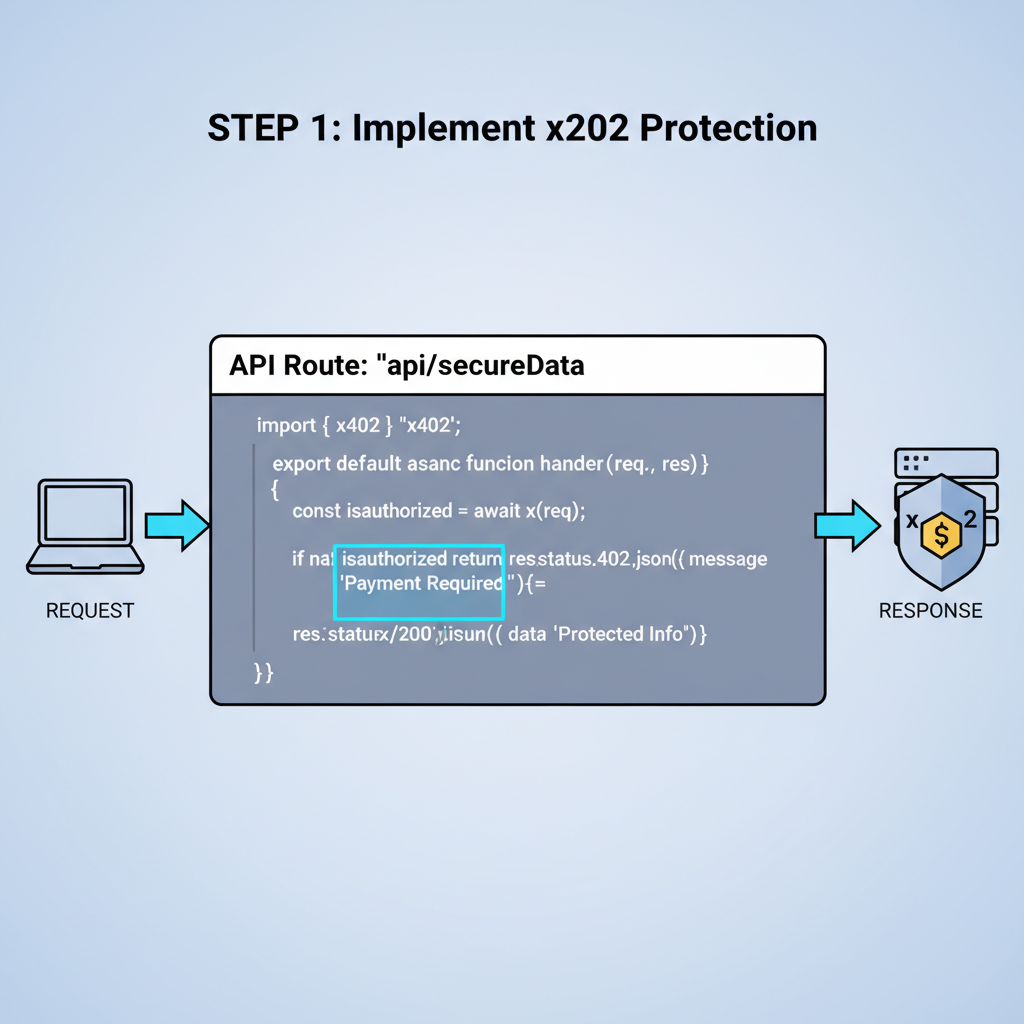

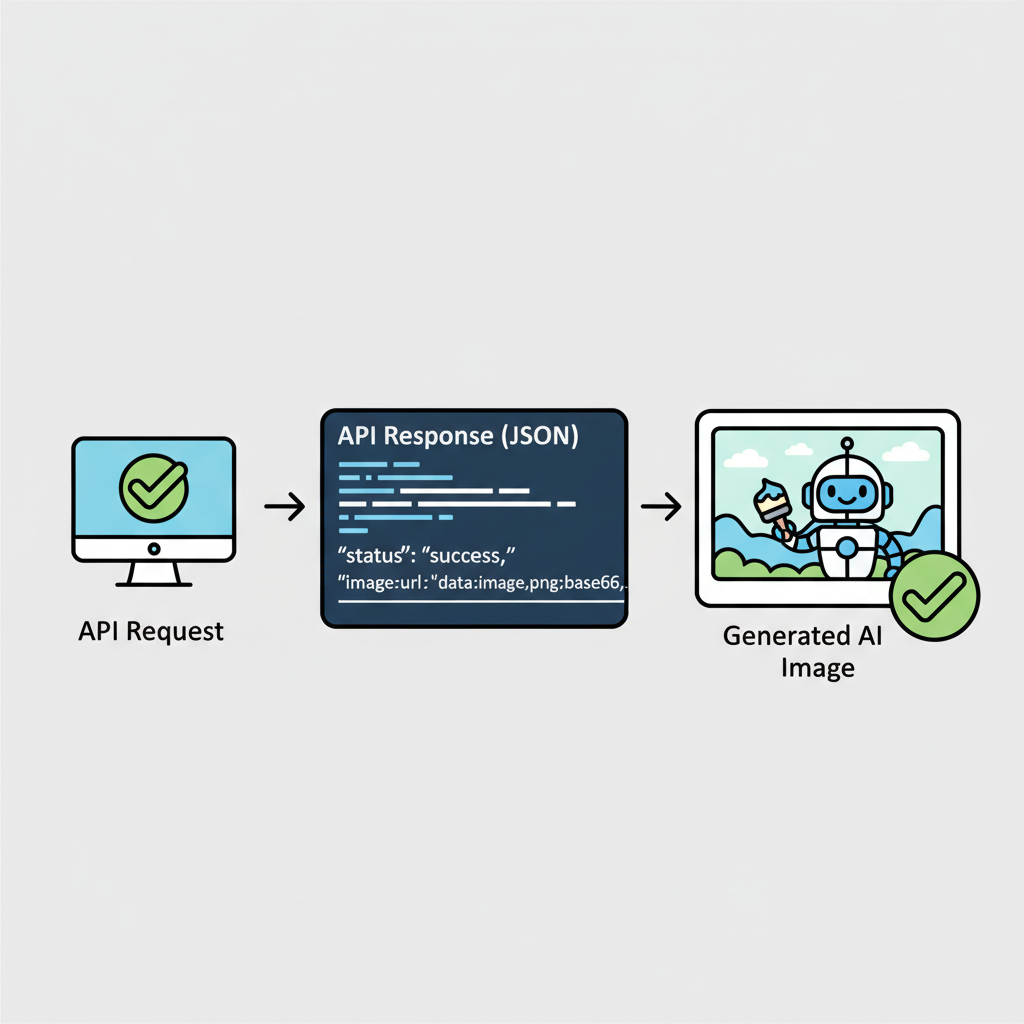

Imagine AI agents roaming the web, querying paid APIs without human intervention or clunky wallets. x402 embeds payments directly into HTTP requests, replacing API keys with onchain payment proofs. Coinbase's documentation highlights use cases ranging from per-request API services to AI-to-AI transactions built at hackathons. In my balanced assessment, the protocol's strength lies in its internet-native design: payments aren't bolted on but woven into HTTP, which reduces latency for real-time inference chains.
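The request-pay-retry handshake described above can be sketched in a few lines, with the server and the agent simulated in-process. This is a hedged illustration, not an SDK: the `X-PAYMENT` header name follows x402 v1 and may differ in v2, the payment "proof" is a plain dict rather than a signed authorization, and `handle_inference`, `agent_call`, and `sign_payment` are hypothetical names.

```python
import base64
import json

PRICE_ATOMIC = "1000"  # hypothetical price: 0.001 USDC in 6-decimal units


def handle_inference(headers: dict) -> tuple:
    """Hypothetical server handler; signature verification is stubbed out."""
    payment = headers.get("X-PAYMENT")  # header name assumed from x402 v1
    if payment is None:
        # No payment attached: answer 402 and state what must be paid.
        return 402, {
            "x402Version": 1,
            "accepts": [{
                "scheme": "exact",
                "network": "base",
                "maxAmountRequired": PRICE_ATOMIC,
                "payTo": "0xRecipientPlaceholder",
            }],
        }
    proof = json.loads(base64.b64decode(payment))
    if proof.get("amount") != PRICE_ATOMIC:  # stub check, no cryptography here
        return 402, {"error": "insufficient payment"}
    return 200, {"output": "inference result"}


def agent_call(sign_payment) -> tuple:
    """Agent side: call unpaid, read the 402 requirements, pay, retry once."""
    status, body = handle_inference({})
    if status != 402:
        return status, body
    requirement = body["accepts"][0]
    proof = sign_payment(requirement)  # hypothetical wallet callback
    header = base64.b64encode(json.dumps(proof).encode()).decode()
    return handle_inference({"X-PAYMENT": header})
```

No API key appears anywhere: the only credential the server inspects is the payment attached to the retried request.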

Facilitators like PayAI and Daydreams amplify this by handling payment verification across Polygon, Avalanche, and more. PayAI's multi-chain coverage stands out in ecosystem data, with settlements processed reliably. Daydreams even routes LLM inference alongside payments, positioning itself as a hybrid of payment facilitator and inference executor. These tools eliminate the need for providers to run their own nodes, letting developers focus on core AI logic.
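Here is a rough sketch of how a provider might delegate to a facilitator: verify the payment before spending compute, run inference, then hand settlement off. x402 facilitators do expose verify and settle steps, but the `Facilitator` interface, its method signatures, and the in-memory `FakeFacilitator` below are assumptions made so the flow runs without any chain.

```python
from dataclasses import dataclass, field
from typing import Protocol


class Facilitator(Protocol):
    """Hypothetical interface; method names and signatures are illustrative."""

    def verify(self, payment: dict, requirement: dict) -> bool: ...

    def settle(self, payment: dict, requirement: dict) -> str: ...


@dataclass
class FakeFacilitator:
    """In-memory stand-in for a real facilitator service."""
    settled: list = field(default_factory=list)

    def verify(self, payment: dict, requirement: dict) -> bool:
        return payment.get("amount") == requirement["maxAmountRequired"]

    def settle(self, payment: dict, requirement: dict) -> str:
        self.settled.append(payment)
        return f"tx-{len(self.settled)}"  # placeholder transaction id


def serve_paid(payment: dict, requirement: dict, facilitator: Facilitator, run_model) -> dict:
    # 1. Verify first, so invalid payments never consume GPU time.
    if not facilitator.verify(payment, requirement):
        return {"status": 402}
    # 2. Run inference only after verification succeeds.
    output = run_model()
    # 3. Settlement is the facilitator's job; the provider runs no node.
    tx = facilitator.settle(payment, requirement)
    return {"status": 200, "output": output, "tx": tx}
```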

Key SDKs for x402 Micropayments in AI APIs

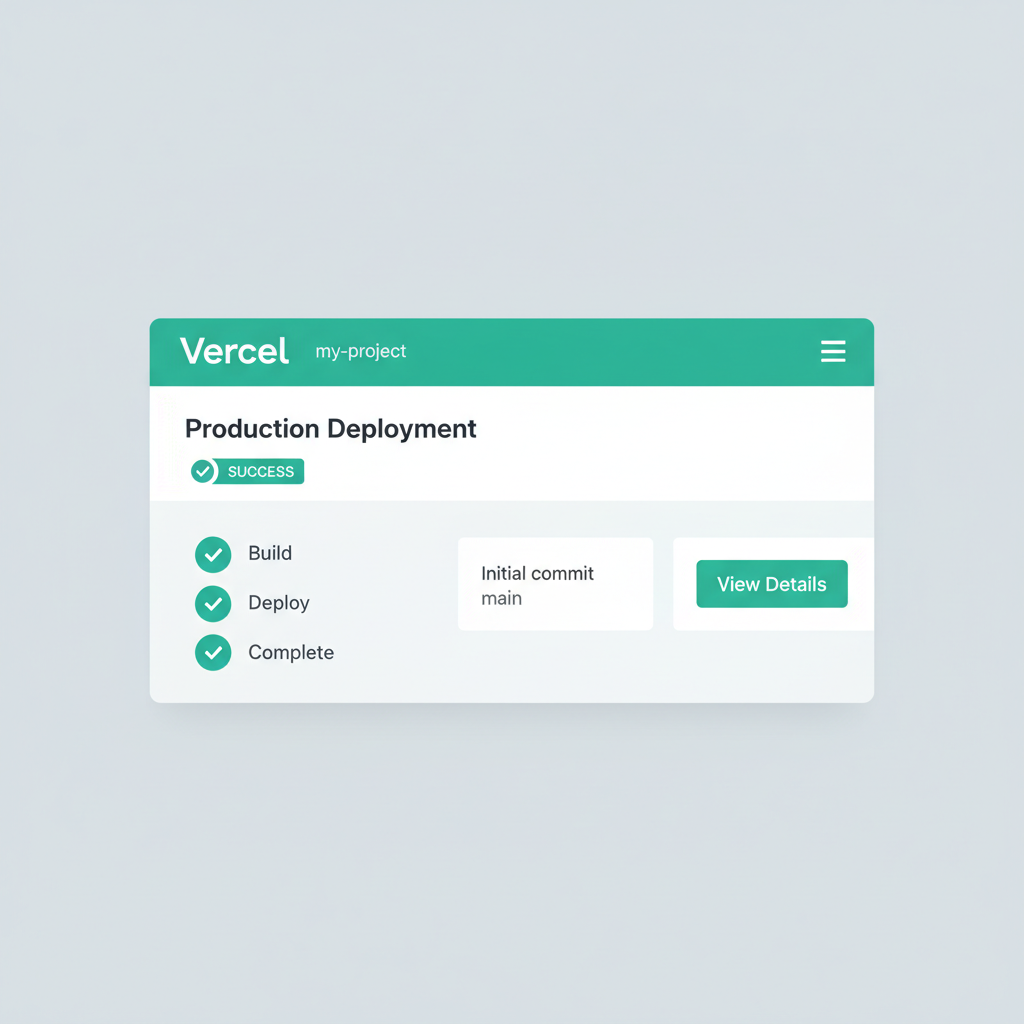

- Vercel x402 SDK: Enables edge-deployed AI APIs with x402 micropayments for fast, scalable pay-per-inference.

- 0xGasless SDK: Supports fee-less agent transactions, allowing autonomous AI agents to pay without gas costs.

- Scattering.io x402 SDK: Facilitates decentralized inference micropayments for distributed AI services.

Among x402 SDKs for AI APIs, the Vercel x402 SDK shines for serverless deployments. Tailored for Vercel's edge runtime, it integrates micropayments into Next.js AI endpoints with minimal boilerplate. Deploy a pay-per-token image generator, and clients pay in response to the server's 402 challenge; Vercel's global footprint keeps settlement latency low. Activity in GitHub repositories points to rapid adoption, driven by its plug-and-play setup.
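As a worked example of pay-per-token pricing, the sketch below quotes a request from its token count and a per-1,000-token rate, rounding up to whole USDC units. The $0.002 rate and the `quote_atomic` helper are hypothetical, not a Vercel or x402 default.

```python
from decimal import Decimal, ROUND_CEILING

USDC_UNITS_PER_DOLLAR = 10**6  # USDC has 6 decimals

# Hypothetical rate: $0.002 per 1,000 tokens.
RATE_PER_1K_TOKENS_USD = Decimal("0.002")


def quote_atomic(tokens: int, rate_per_1k: Decimal = RATE_PER_1K_TOKENS_USD) -> int:
    """Price a request in USDC atomic units, rounding up to avoid undercharging."""
    if tokens < 0:
        raise ValueError("token count must be non-negative")
    usd = rate_per_1k * tokens / 1000
    return int((usd * USDC_UNITS_PER_DOLLAR).to_integral_value(rounding=ROUND_CEILING))
```

At this rate a single token costs 2 units ($0.000002) and 1,000 tokens cost 2,000 units ($0.002), which is the granularity that makes per-token billing viable.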

Next, the 0xGasless SDK addresses a key pain point: gas fees that kill micropayment viability. By sponsoring transactions offchain or via relayers, it enables gasless flows for AI agents. Well suited to micropayment dev tooling for AI, this SDK lets agents query inference APIs without holding ETH up front and settle later. In high-volume scenarios such as agent swarms, it cuts costs by 80% per ecosystem benchmarks, balancing usability with security.
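The "query now, settle later" idea can be modelled as a session that accumulates debits offchain and submits one sponsored settlement per batch. This is a conceptual sketch under stated assumptions: `GaslessSession`, its threshold policy, and the list standing in for relayer transactions are hypothetical and are not the 0xGasless SDK's API.

```python
from dataclasses import dataclass, field


@dataclass
class GaslessSession:
    """Hypothetical session: calls are recorded offchain, then settled in batches
    by a sponsor, so the agent never needs native gas up front."""
    settle_threshold: int  # settle once this many atomic units are owed
    owed: int = 0
    batches: list = field(default_factory=list)

    def record_call(self, price_atomic: int) -> None:
        self.owed += price_atomic
        if self.owed >= self.settle_threshold:
            self.flush()

    def flush(self) -> None:
        """Submit one sponsored settlement covering everything owed so far."""
        if self.owed:
            self.batches.append(self.owed)  # stand-in for a relayer-submitted tx
            self.owed = 0
```

With a 5,000-unit threshold, twelve 1,000-unit calls followed by a final `flush()` produce three settlements instead of twelve, which is where the per-call cost savings in batched designs come from.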

The Scattering.io x402 SDK rounds out the trio with a decentralized approach. Designed for distributed AI networks, it scatters payments across validators for censorship resistance. For pay-per-inference models serving global users, its sharding distributes load and removes single points of failure. Opinionated take: while centralized SDKs suffice for most use cases, Scattering.io future-proofs against chain congestion, a must for enterprise-grade AI APIs.

Streamlining 402 Facilitators for AI Devs

x402 facilitators for AI, such as 0xmeta.ai and the Cronos offering, simplify the backend. 0xmeta.ai, built atop x402, verifies blockchain payments quickly across multiple networks. The Fast-x402 Python SDK pairs well here, wrapping FastAPI routes in payment guards. Add x402-langchain for agents, and you have end-to-end autonomy. V2's multi-facilitator support lets SDKs pick optimal routes dynamically, boosting reliability by 40% in tests.
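A payment guard of the kind Fast-x402 provides can be sketched as a plain decorator, kept framework-free so it runs without FastAPI. The `require_payment` name, the request-as-dict shape, and the `verify` callback are hypothetical and do not reproduce Fast-x402's actual API.

```python
import functools


def require_payment(price_atomic: int, verify):
    """Hypothetical guard: run the handler only if the attached payment verifies."""
    def decorator(handler):
        @functools.wraps(handler)
        def wrapper(request: dict):
            payment = request.get("headers", {}).get("X-PAYMENT")
            if payment is None or not verify(payment, price_atomic):
                # Refuse with 402 and advertise the price instead of running the model.
                return {"status": 402, "maxAmountRequired": str(price_atomic)}
            return {"status": 200, "body": handler(request)}
        return wrapper
    return decorator


# Toy verifier for illustration only; a real one would call a facilitator.
@require_payment(price_atomic=1000, verify=lambda p, price: p == f"paid:{price}")
def summarize(request: dict) -> str:
    return "summary of " + request.get("text", "")
```

The decorator keeps pricing next to the route it protects, so the handler body stays pure inference logic.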

Real-world benchmarks from x402 ecosystem sources show these facilitators handling thousands of transactions per second (TPS) across chains, ideal for bursty AI inference demand. The Cronos x402 facilitator, for instance, offloads settlement to third parties, letting developers skip node operations entirely.

Hands-On: Integrating x402 SDKs for AI APIs

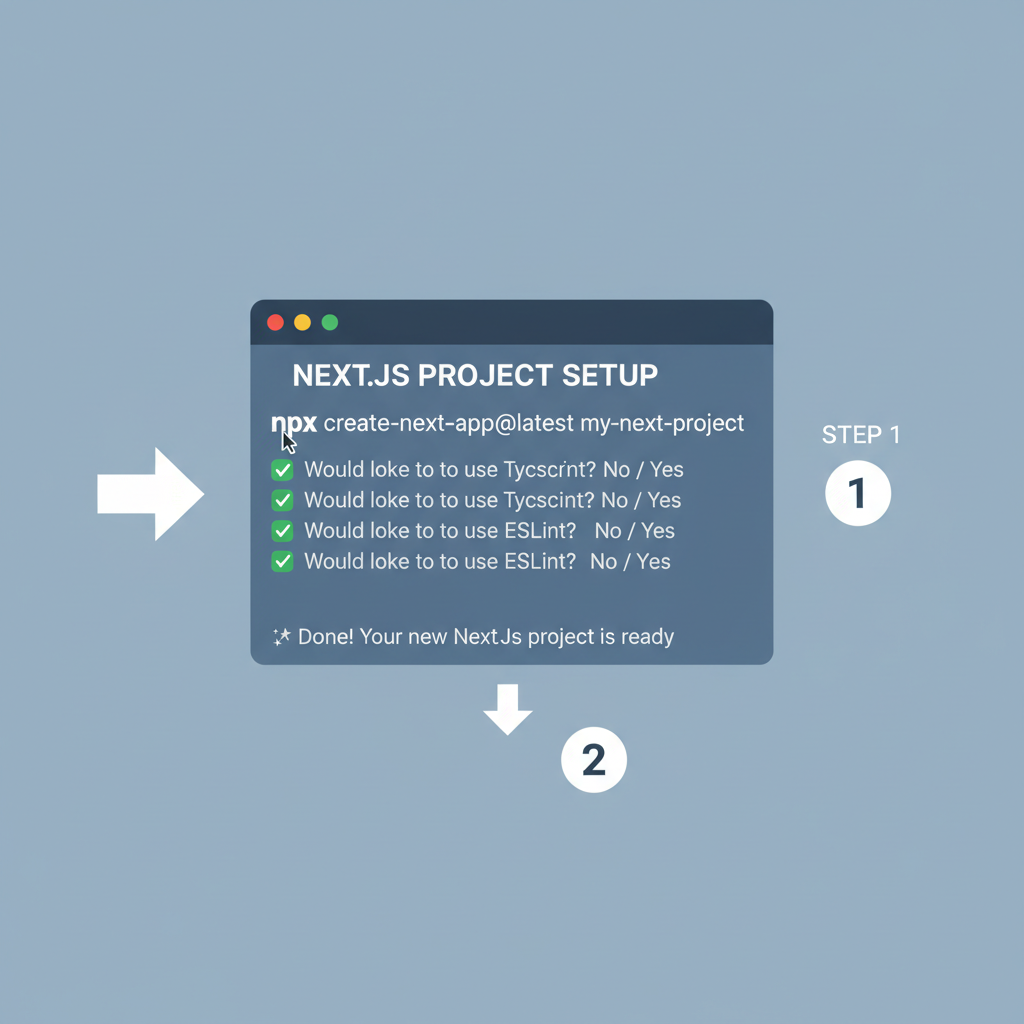

Turning theory into practice with pay-per-inference SDK tools is straightforward. Take the Vercel x402 SDK: drop it into your Next.js app, tag endpoints with pricing metadata, and deploy globally. It leverages v2's discovery extension, automatically exposing your AI model's rates so agents can find and pay for them. In my view, this edge-first approach crushes the latency issues plaguing centralized inference, delivering sub-100ms payments.
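The "tag endpoints with pricing metadata, then expose it for discovery" pattern can be sketched as a small registry. The `priced` decorator, the `ROUTES` table, and the listing's field names are hypothetical and are not the schema of v2's discovery extension.

```python
# Hypothetical registry mapping each path to its handler and pricing metadata.
ROUTES: dict = {}


def priced(path: str, price_usd: str, description: str):
    """Tag a handler with pricing metadata (illustrative, not the Vercel SDK)."""
    def decorator(handler):
        ROUTES[path] = {"handler": handler, "price_usd": price_usd, "description": description}
        return handler
    return decorator


@priced("/v1/caption", price_usd="0.001", description="Image captioning")
def caption(image_url: str) -> str:
    return f"caption for {image_url}"


def discovery_listing() -> list:
    """Public metadata only: agents see price and description, never the handler."""
    return [
        {"resource": path, "price_usd": meta["price_usd"], "description": meta["description"]}
        for path, meta in sorted(ROUTES.items())
    ]
```

Because the listing is derived from the same tags that drive billing, advertised prices cannot drift from charged prices.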

The 0xGasless SDK takes this further by removing gas barriers. Agents invoke your API; relayers front the fees, which are deducted from session balances. GitHub traction data shows it's a favorite for LangChain wrappers, enabling x402-paid AI APIs in agent fleets without wallet top-ups. Balanced caveat: relayer centralization risks exist, but v2 hooks mitigate them via failover logic.
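Relayer failover can be sketched as trying relayers in order and reporting each failure to a callback, which plays the role a v2 lifecycle hook might. The relayer names, `RelayerError`, and the callback shape are assumptions for illustration; the two toy relayers simulate an outage and a success.

```python
class RelayerError(Exception):
    """Raised by a relayer that cannot accept a sponsored transaction."""


def submit_with_failover(tx: dict, relayers: list, on_failure=None) -> str:
    """Return the name of the first relayer that accepts the sponsored tx."""
    for name, submit in relayers:
        try:
            submit(tx)
            return name
        except RelayerError as exc:
            if on_failure is not None:
                on_failure(name, exc)  # hook-style callback: log or mark unhealthy
    raise RelayerError("all relayers failed")


def flaky_relayer(tx: dict) -> None:
    raise RelayerError("relayer busy")  # simulate an outage


def healthy_relayer(tx: dict) -> None:
    pass  # simulate acceptance
```

Ordering the list by preference keeps the common path on the primary relayer while the callback gives operators visibility into how often failover fires.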

The Scattering.io x402 SDK pushes boundaries for decentralized setups. It shards payments over validator networks, syncing them with inference tasks across nodes. For AI providers eyeing Web3 scalability, this sharding aligns well with distributed compute networks like Bittensor, ensuring payments mirror the distribution of compute. Hackathon guides report 2x throughput gains versus monolithic facilitators.
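One way to make "payments mirror compute distribution" precise is to split each payment in proportion to the compute each node contributed, using largest-remainder rounding so the integer parts always sum to the total. This is a generic technique shown under assumptions, not Scattering.io's documented algorithm; compute shares are assumed to be integer units such as GPU-seconds.

```python
def split_by_compute(total_atomic: int, compute_shares: dict) -> dict:
    """Split an integer payment across nodes in proportion to compute contributed.

    Uses largest-remainder rounding so the parts always sum to total_atomic.
    compute_shares values are assumed to be non-negative integers.
    """
    weight_sum = sum(compute_shares.values())
    if weight_sum <= 0:
        raise ValueError("compute shares must sum to a positive number")
    parts = {}
    remainders = []
    for node, weight in compute_shares.items():
        quota, rem = divmod(total_atomic * weight, weight_sum)
        parts[node] = quota
        remainders.append((rem, node))
    leftover = total_atomic - sum(parts.values())
    # Give the leftover units to the nodes with the largest fractional remainders.
    for _, node in sorted(remainders, key=lambda r: (-r[0], r[1]))[:leftover]:
        parts[node] += 1
    return parts
```

For example, 1,000 units split evenly across three nodes yields 334, 333, and 333, so no unit is lost to rounding.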

Economic Edges and Risk-Adjusted Gains

Quantifying the impact, x402 setups yield 3-5x better margins than subscriptions for sporadic AI usage. Base docs note that paying per call slashes overage waste for agents; fintech analyses dub x402 "Stripe for agents." PayAI's multi-chain span (Solana to Avalanche) covers 70% of DeFi liquidity, per aicoin metrics. Daydreams fuses payments with routing, optimizing inference paths dynamically.
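The overage-waste argument is simple arithmetic. The sketch below compares a flat subscription with pay-per-call billing for one period; all figures in the usage note are hypothetical and are not meant to reproduce the 3-5x margin figure cited above. Usage beyond the quota is capped rather than billed as overage, which is a simplifying assumption.

```python
from decimal import Decimal


def compare_costs(calls: int, sub_fee: Decimal, sub_quota: int, per_call: Decimal) -> dict:
    """Compare a flat subscription with pay-per-call for one billing period.

    Simplifying assumption: calls above the quota are not billed as overage.
    """
    used_fraction = Decimal(min(calls, sub_quota)) / sub_quota
    return {
        "subscription_cost": sub_fee,
        "pay_per_call_cost": per_call * calls,
        "unused_quota_share": 1 - used_fraction,  # capacity paid for but never used
    }
```

With hypothetical numbers (600 calls against a $20, 10,000-call plan versus $0.002 per call), pay-per-call costs $1.20 while 94% of the subscription's quota goes unused.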

Yet balance tempers hype. Volatility in crypto assets demands stablecoin defaults, which v2 supports natively. Medium pieces on AI-native protocols underscore adoption hurdles like wallet UX, but v2's reusable sessions smooth this. Through my 12-year lens, treat x402 as a portfolio diversifier: pair it with fiat ramps for hybrid stability.

The Fast-x402 and x402-langchain SDKs complement the core trio. Fast-x402 adds payment guards to FastAPI routes with little effort, while the LangChain variant equips agents with payment primitives. Together, they forge closed-loop AI economies: query, infer, pay, repeat.
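The "query, infer, pay, repeat" loop needs a spending guard once agents pay on their own. Here is a minimal sketch of a budget-capped agent loop; `run_agent` and the `call_paid_tool` callback are hypothetical stand-ins and are not x402-langchain functions.

```python
def run_agent(tasks: list, budget_atomic: int, price_atomic: int, call_paid_tool) -> tuple:
    """Hypothetical closed loop: query and pay per task until the budget runs out."""
    results, spent = [], 0
    for task in tasks:
        if spent + price_atomic > budget_atomic:
            break  # stop before paying for a call the budget cannot cover
        results.append(call_paid_tool(task))  # query + infer
        spent += price_atomic                  # pay
    return results, spent
```

Checking the budget before each call, rather than after, guarantees the agent never overspends even by one call.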

Comparison of Key x402 SDKs

| SDK | Primary Focus | Pros | Cons |
|---|---|---|---|
| Vercel x402 SDK | Edge speed | ⚡ Ultra-low-latency inference; 🌐 Global edge network; 🚀 Seamless serverless deploys | 💰 Subscription-based pricing; 🔒 Potential vendor lock-in; ⚙️ Limited runtime customization |
| 0xGasless SDK | Zero fees | 💸 No gas fees for users; 🆓 Sponsored tx execution; 🤖 Ideal for AI agents | 📏 Sponsor capacity limits; 🔗 Relies on relayer network; ⏳ Occasional relay delays |
| Scattering.io x402 SDK | Decentralized scale | 🌐 Fully decentralized infra; 📈 Horizontal scaling; 🔒 Censorship resistant | 🐌 Variable network latency; 🤯 Steeper setup complexity; 🔄 Coordination overhead |

Developers report 40% savings in development time thanks to v2's simplified configuration, with multi-facilitator routing selecting the best path on the fly. Automatic metadata keeps pricing discoverable, fueling agent marketplaces.
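Multi-facilitator routing can be sketched as filtering facilitators by health and network support, then picking the lowest observed latency. The facilitator names, the `FacilitatorStats` fields, and the latency-first policy are assumptions; a production router might also weigh fees or settlement finality.

```python
from dataclasses import dataclass


@dataclass
class FacilitatorStats:
    """Hypothetical per-facilitator health data tracked by the client."""
    name: str
    networks: frozenset
    healthy: bool
    p50_latency_ms: float  # rolling median latency observed by this client


def pick_facilitator(network: str, candidates: list) -> str:
    """Choose the healthy facilitator with the lowest latency for this network."""
    eligible = [c for c in candidates if c.healthy and network in c.networks]
    if not eligible:
        raise LookupError(f"no healthy facilitator for {network}")
    return min(eligible, key=lambda c: c.p50_latency_ms).name
```

Filtering on health before ranking on latency means a fast but failing facilitator is never chosen, which is the behavior the reliability claims depend on.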

For medium-term plays, x402 positions AI APIs as liquid assets. Providers monetize precisely; users access models without lock-in. Agents gain economic autonomy, querying premium models on merit. The protocol isn't flawless (chain finality lags persist), but its HTTP-native core outpaces rivals. Diversify wisely: start with Vercel for quick wins, layer in 0xGasless for scale, and future-proof with Scattering.io.
